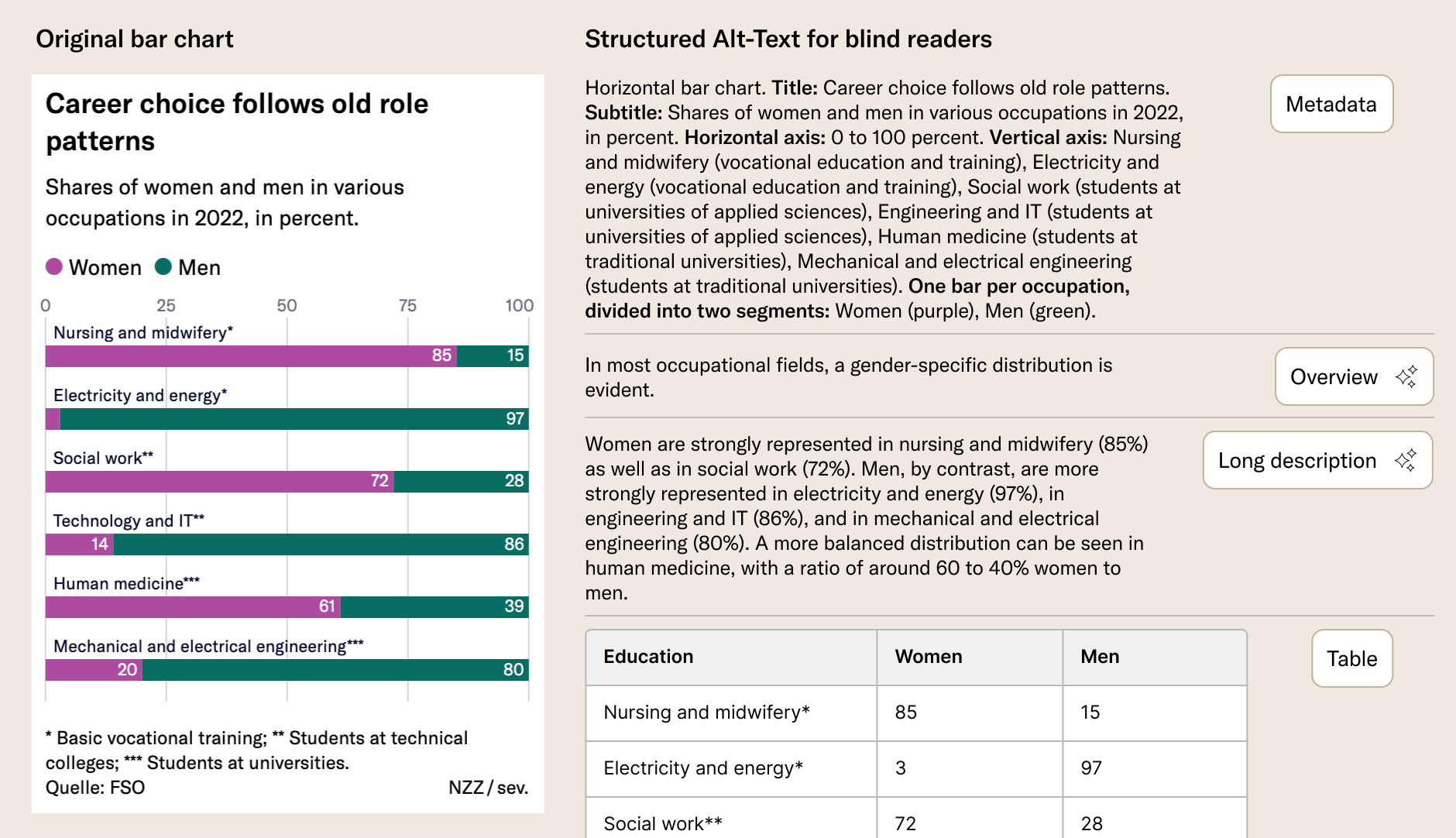

Where sighted readers see a chart, blind readers often get nothing. The reason: chart authors rarely have the time to write good alt text. Can we automate this now?

When I passed a screenshot of a simple line or bar chart to a current multimodal AI, I got a pretty decent description of its contents. At least that’s what I thought. But is it actually helpful for blind readers?

I took this question to the Institute of Interactive Technologies at the FHNW. Alessia Vannini and Julia Locher, two Data Science bachelor's students, took on the challenge.

Initial tests and a literature review indicated that the texts were too long and unstructured to be useful. The models also started to interpret trends in the data. These interpretations were sometimes interesting, but they were undesired.

Earlier work on generating alt text for visualizations had relied on a dataset of 35 000 Statista charts and corresponding «gold-standard» descriptions. In tests with blind people, however, Alessia and Julia found that these were not in the format preferred by readers with visual impairments.

So they created their own. In collaboration with a linguist and blind people recruited through the newsletter of the SBV, they wrote 41 gold-standard texts matched to NZZ charts.

Then they iterated on prompts to have Gemini 2.5 Flash generate descriptions that matched the gold standard as well as possible. The similarity was measured with SBERT. They also set upper limits on the length of these texts.
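The scoring step can be sketched roughly as follows. This is a minimal illustration, not the students' code: `embed` stands for any function that turns texts into vectors (in the study that role was played by SBERT), `toy_embed` is a word-count stand-in that exists only so the sketch runs without a model, and the 120-word limit is a made-up value.

```python
import math
from collections import Counter
from typing import Callable, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either one is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def toy_embed(texts: List[str]) -> List[List[float]]:
    """Hypothetical stand-in for a sentence-embedding model.

    Builds word-count vectors over the shared vocabulary of the given texts.
    A real setup would return sentence embeddings here instead.
    """
    counts = [Counter(text.lower().split()) for text in texts]
    vocab = sorted(set().union(*counts))
    return [[float(c[word]) for word in vocab] for c in counts]


def score_description(
    candidate: str,
    gold: str,
    embed: Callable[[List[str]], List[List[float]]] = toy_embed,
    max_words: int = 120,  # assumed limit, not the one used in the study
) -> dict:
    """Compare a generated description with its gold-standard text."""
    vec_candidate, vec_gold = embed([candidate, gold])
    return {
        "similarity": cosine_similarity(vec_candidate, vec_gold),
        "within_limit": len(candidate.split()) <= max_words,
    }
```

Keeping the length check next to the similarity score makes it harder for a prompt to "win" simply by producing a longer text that happens to overlap more with the gold standard.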

Their findings suggest that much of the text can be generated without the help of AI. Blind readers appreciate having the data table available, as well as chart metadata such as the title, axis domains or categories. LLMs contribute a description of trends, comparisons, outliers, etc. Structure is extremely important to blind readers, as it allows for quick navigation. They want to be able to scan a chart rather than having to listen to a long text.
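The part that needs no AI could look something like this. It is a sketch under assumptions: the `ChartSpec` fields and the section labels are invented for illustration and are not the structure used in the study, and the trend summary is simply passed in as a string from whatever model produces it.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ChartSpec:
    """Hypothetical container for the chart metadata an author already has."""
    title: str
    chart_type: str
    x_label: str
    y_label: str
    rows: List[Tuple[str, float]] = field(default_factory=list)  # (category, value)


def build_alt_text(spec: ChartSpec, trend_summary: str = "") -> str:
    """Assemble a structured description; only `trend_summary` comes from an LLM."""
    lines = [
        f"Title: {spec.title}",
        f"Chart type: {spec.chart_type}",
        f"X axis: {spec.x_label}",
    ]
    values = [value for _, value in spec.rows]
    if values:
        # The axis domain is read directly from the data, no model involved.
        lines.append(f"Y axis: {spec.y_label}, from {min(values)} to {max(values)}")
    else:
        lines.append(f"Y axis: {spec.y_label}")
    lines.append("Categories: " + ", ".join(label for label, _ in spec.rows))
    if trend_summary:
        lines.append(f"Summary: {trend_summary}")
    lines.append("Data table:")
    lines.extend(f"  {label}: {value}" for label, value in spec.rows)
    return "\n".join(lines)
```

Putting each piece on its own labelled line is one way to give screen reader users something they can skip through instead of a single long paragraph.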

The study also revealed some limitations of current AI models – as of December 2025. Hallucinations were a smaller problem than expected, but charts with many categories or annotations at times led to wrong interpretations. The chart types tested were bar charts, stacked bar charts and line charts.

Finally, the students experimented with LLM-as-judge, an approach described in the literature. The idea is that another AI judges the output of the generator and produces a numerical score. However, the results often did not match human assessment.
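To make the idea concrete, here is a minimal sketch of how judge scores might be collected and compared with human ratings. Everything in it is an assumption for illustration: the prompt wording, the 1 to 5 scale, the `ask_model` callable (a placeholder for whatever API sends a prompt to the judge model and returns its reply) and the choice of Pearson correlation as the agreement measure.

```python
import math
import re
from typing import Callable, Optional, Sequence

# Hypothetical prompt wording, not the one used in the study.
JUDGE_PROMPT = (
    "Rate the following chart description from 1 (poor) to 5 (excellent). "
    "Reply with the number only.\n\n{description}"
)


def judge_score(description: str, ask_model: Callable[[str], str]) -> Optional[int]:
    """Ask a judge model for a 1-5 score; return None if the reply has no valid score."""
    reply = ask_model(JUDGE_PROMPT.format(description=description))
    match = re.search(r"\b[1-5]\b", reply)
    return int(match.group()) if match else None


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equally long lists of scores."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least two scores")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / math.sqrt(var_x * var_y)
```

A correlation near 1 between judge scores and human ratings for the same descriptions would suggest the judge can stand in for people; a value near 0 would suggest it cannot.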

The full report, the gold-standard texts and their corresponding charts can be found on GitHub.